By Marjorie Greene

Introduction

At a recent Berkshire Hathaway shareholder meeting, Warren Buffett said that Artificial Intelligence, the collection of technologies that enable machines to learn on their own, could be “enormously disruptive” to human society. More recently, the renowned physicist Stephen Hawking predicted that humanity may have only about one hundred years left on Earth. He believes that, because of the development of Artificial Intelligence, machines may no longer simply augment human activities but may replace humans altogether in the command and control of cognitive tasks.

In my recent presentation to the annual Human Systems conference in Springfield, Virginia, I suggested that there is a risk that human decision-making may no longer be involved in the use of lethal force as we capitalize on the military applications of Artificial Intelligence to enhance war-fighting capabilities. Humans should never relinquish control of decisions regarding the employment of lethal force. How do we keep humans in the loop? This is an area of human systems research that will be important to undertake in the future.

Self-Organization

Norbert Wiener, in his book Cybernetics, was perhaps the first person to discuss the notion of “machine learning.” Building on behavioral models of animal collectives such as ant colonies and flocks of birds, he described a process called “self-organization” by which humans and, by analogy, machines learn by adapting to their environment. Self-organization refers to the emergence of higher-level properties of a whole that none of its individual parts possesses. Each part acts locally on local information, and global order emerges without any need for external control. The term “swarm intelligence” is often used for the collective behavior of self-organized systems, in which “intelligent” global behavior emerges that is unknown to any individual member.
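To make the idea of local rules producing global order concrete, here is a minimal sketch in Python. It is not Wiener's model; it is a simple averaging (consensus) rule, loosely analogous to the alignment rule used in flocking simulations. Agents sit on a ring, each sees only its two neighbors, and at every step it sets its heading to the average of its own and theirs. There is no leader and no agent sees the whole group. The number of agents, the number of steps, and the restriction of headings to [0, 180) degrees (so plain averaging can ignore wraparound at 360 degrees) are illustrative assumptions.

```python
import random


def step(headings):
    """One synchronous update: each agent averages its own heading with
    its two ring neighbors. No agent uses any information beyond them."""
    n = len(headings)
    return [
        (headings[i - 1] + headings[i] + headings[(i + 1) % n]) / 3.0
        for i in range(n)
    ]


def spread(headings):
    """Global disorder measure: gap between the largest and smallest heading."""
    return max(headings) - min(headings)


def simulate(n_agents=20, n_steps=500, seed=0):
    """Run the local averaging rule and record the spread after every step."""
    rng = random.Random(seed)
    # Assumed initial headings in degrees, kept within [0, 180) so that
    # simple arithmetic averaging does not have to handle wraparound at 360.
    headings = [rng.uniform(0.0, 180.0) for _ in range(n_agents)]
    history = [spread(headings)]
    for _ in range(n_steps):
        headings = step(headings)
        history.append(spread(headings))
    return headings, history


if __name__ == "__main__":
    final, history = simulate()
    print(f"initial spread: {history[0]:.2f} deg")
    print(f"final spread:   {history[-1]:.6f} deg")
```

Because each update is an average, no agent's new heading can fall outside the current range of headings, so the spread can only shrink; the group aligns even though no controller ever sees or steers the whole swarm.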

Swarm Warfare

Military researchers are especially concerned about recent breakthroughs in swarm intelligence that could enable “swarm warfare” for asymmetric assaults against major U.S. weapons platforms, such as aircraft carriers. The accelerating speed of computer processing, along with rapid improvements in autonomy-increasing algorithms, also suggests that the military may be able to perform a wider range of functions more quickly without needing humans to control every individual task.

Drones like the Predator and Reaper are still piloted vehicles: humans control what the camera looks at, where the drone flies, and which targets to hit with its missiles. Yet CNA studies have shown that drone strikes in Afghanistan caused 10 times as many civilian casualties as strikes by manned aircraft. A recent book published by CNA jointly with the Marine Corps University Press builds on CNA studies of national security, legitimacy, and civilian casualties and concludes that it will be important to consider International Humanitarian Law (IHL) in rethinking the drone war as Artificial Intelligence continues to flourish.

The Chinese Approach

Meanwhile, many Chinese strategists recognize the trend towards unmanned and autonomous warfare and intend to capitalize on it. The PLA has incorporated a range of unmanned aerial vehicles into the force structure of all its services, and the PLA Air Force and PLA Navy have started to introduce more advanced multi-mission unmanned aerial vehicles. China is clearly intensifying its military applications of Artificial Intelligence. As we heard at a recent hearing of the U.S.-China Economic and Security Review Commission (where CNA’s China Studies Division also testified), the Chinese defense industry has made significant progress in researching and developing a range of cutting-edge unmanned systems, including some with swarming capabilities. China also views outer space as a new domain that it must fight for and seize if it is to win future wars.

Armed with Artificial Intelligence capabilities, China has moved beyond technology development to lay the groundwork for the operational and command-and-control concepts that will govern their use. These developments have important consequences for the U.S. military and suggest that Artificial Intelligence plays a prominent role in China’s overall effort to build a military capable of winning wars through an asymmetric strategy directed at critical military platforms.

Human-Machine Teaming

Human-machine teaming is gaining importance in national security affairs, as a recent internal DoD and DHS summit on defense unmanned systems showed: many of the speakers explicitly addressed combining unmanned systems with manned ones.

Examples include Michael Novak, Acting Director of the Unmanned Systems Directorate (N99), who spoke of optimizing human-machine teaming to multiply capabilities and reinforce trust (incidentally, a decision has been made to phase out N99 because unmanned capabilities are being “mainstreamed” across the force); Bindu Nair, Deputy Director of the Human Systems, Training & Biosystems Directorate, OASD, who emphasized efforts to develop greater unmanned capabilities that intermix with manned capabilities and future systems; and Kris Kearns of the Air Force Research Lab, who discussed current efforts to mature and update autonomous technologies and manned-unmanned teaming.

DARPA

Finally, the Defense Advanced Research Projects Agency (DARPA) has recently issued a relevant Broad Agency Announcement (BAA) titled “OFFensive Swarm-Enabled Tactics” as part of the Defense Department’s OFFSET initiative. Notably, it includes a section requesting tactics for collaboration between humans and the swarm, especially in urban environments. This should reassure the human systems community that its concerns will not be forgotten, even as swarm intelligence makes it possible to achieve global order without any need for external control.

Conclusion

As we capitalize on the military applications of Artificial Intelligence, there is a risk that human decision-making may no longer be involved in the use of lethal force. In general, Artificial Intelligence could indeed be disruptive to human society by replacing the need for human control, but machines do not have to replace humans in the command and control of cognitive tasks, particularly in military contexts. We need to figure out how to keep humans in the loop, and this would be a fruitful area for the human systems community to research in the future.

Marjorie Greene is a Research Analyst with the Center for Naval Analyses. She has more than 25 years’ management experience in both government and commercial organizations and has recently specialized in finding S&T solutions for the U.S. Marine Corps. She earned a B.S. in mathematics from Creighton University and an M.A. in mathematics from the University of Nebraska, and she completed her Ph.D. course work in Operations Research at The Johns Hopkins University. The views expressed here are her own.

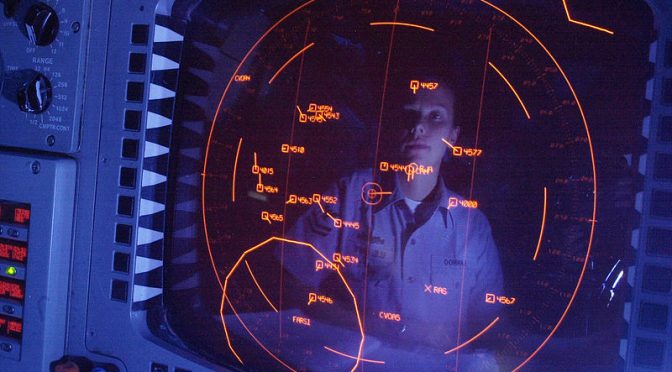

Featured Image: Electronic Warfare Specialist 2nd Class Sarah Lanoo from South Bend, Ind., operates a Naval Tactical Data System (NTDS) console in the Combat Direction Center (CDC) aboard USS Abraham Lincoln. (U.S. Navy photo by Photographer’s Mate 3rd Class Patricia Totemeier)

Discover more from Center for International Maritime Security

