By Jonathan Panter

On March 14, two Russian fighter jets intercepted a U.S. Air Force MQ-9 Reaper in international airspace, breaking one of the drone’s propellers and forcing it to crash into the Black Sea. The Russians probably understood that U.S. military retaliation – or, more importantly, escalation – was unlikely; wrecking a drone is not like killing people. Indeed, the incident contrasts sharply with the recent revelation of another aerial face-off. In late 2022, Russian aircraft nearly shot down a manned British RC-135 Rivet Joint surveillance aircraft. Senior defense officials later indicated that the escalatory consequences of that incident could have been severe.1

There is an emerging view among scholars and policymakers that unmanned aerial vehicles can reduce the risk of escalation by providing an off-ramp during crisis incidents that, were human beings involved, might otherwise spark public calls for retaliation. Other recent events, such as the Iranian shoot-down of a U.S. RQ-4 Global Hawk in the Persian Gulf in 2019 – which likewise did not prompt kinetic U.S. military retaliation – lend credence to this view. But in another theater, the Indo-Pacific, the outlook for unmanned escalation dynamics is uncertain, and potentially much worse. There, unmanned (and soon, autonomous) military competition will occur not just between aircraft, but between vessels on and beneath the ocean’s surface.

Over the past two decades, China has substantially enlarged its navy and irregular maritime forces, deploying them to patrol its excessive maritime claims and to threaten Taiwan. It has also expanded its nuclear arsenal and built a conventional anti-access, area-denial capacity whose overlap with its nuclear deterrence architecture remains unclear. Unmanned and autonomous maritime systems add a major unknown to this mix. Unmanned ships and submarines may strengthen capabilities in ways not currently anticipated; introduce unexpected vulnerabilities across entire warfare areas; lower the threshold for escalatory acts; or complicate each side’s ability to make credible threats and assurances.

Forecasting Escalation Dynamics

Escalation is a transition from peace to war, or an increase in the severity of an ongoing conflict. Many naval officers assume that unmanned ships are inherently de-escalatory assets due to their lack of personnel onboard. Recent high-profile incidents – such as the MQ-9 Reaper and RQ-4 Global Hawk incidents mentioned previously – seem, at first glance, to confirm this assumption. The logic is simple: if one side destroys the other’s unmanned asset, the victim will feel less compelled to respond, since no lives were lost.

While enticing, this assumption is also illusory. First, these examples are of limited applicability: most unmanned ships and submarines under development will not be deployed independently. They will operate in tandem with each other and with manned assets, so the compromise of one vessel – potentially by cyber means – could affect others and degrade a force’s overall readiness. The most serious escalation risk thus lies at the systemic, or fleetwide, level, not at the level of individual shoot-downs.

Second, lessons about escalation from two decades of operational employment of unmanned aircraft cannot be imported, wholesale, to the surface and subsurface domains – where there is little to no operational record of unmanned vessel employment. The technology, operating environments, expected threats, tactics, and other factors differ substantially.

Our understanding of one variant of escalation, that in the nuclear realm, is famously theoretical – the result of deductive logic, modeling, or gaming – rather than empirical, since nuclear weapons have only been used once in conflict, and never between two nuclear powers. Right now, the story is similar for unmanned surface and subsurface vessels. Neither side has yet deployed unmanned vessels in sufficient numbers or for sufficient duration, and across a great enough variety of contexts, for researchers to draw evidence-based conclusions. Everything remains a projection.

Fortunately, three existing areas of academic scholarship – crisis bargaining, inadvertent nuclear escalation, and escalation in cyberspace – provide some clues about what naval escalation in an unmanned context might look like.

Crisis Bargaining

During international crises, a state may try to convince its opponent that it is willing to fight over an issue – and that, if war were to break out, it would prevail. The goal is to get what you want without actually fighting. To intimidate an opponent, a state might inflate its capabilities or hide its weaknesses. To convince others of its willingness to fight, a state might take actions that create a risk of war, such as mobilizing troops; such actions are known as “costly signals.” Ascertaining capability and intent in international crises is therefore quite difficult, and misjudging either may lead to war.2

Between nuclear-armed states, these phenomena are more severe. Neither side wants nuclear war, nor believes that the other is willing to risk it. To make threats credible, therefore, states may initiate an unstable situation (“rock the boat”) but then “tie their own hands” so that catastrophe can be averted only if the opponent backs down. States do this by, for example, automating decision-making or stationing troops in harm’s way.3

The proliferation of unmanned and autonomous vessels is likely to affect all of these crisis bargaining strategies. First – as noted previously – unmanned vessels may be perceived as “less escalatory,” since deploying them does not risk sailors’ lives. But this perception could have the opposite effect if states, believing the escalation risk to be lower, deploy their unmanned vessels closer to an adversary’s territory or defensive systems. The adversary might, in turn, believe that his opponent is preparing the battlespace, or even that an attack is imminent. Economists call this paradox “moral hazard.” The classic example is an insured person who takes on more risk because the insurer, rather than the individual, bears the cost of a loss.

Second, a truly autonomous platform – one lacking a means of being recalled or otherwise controlled after its deployment – would be ideal for “tying hands” strategies. A state could send such vessels to run a blockade, for instance, daring the other side to fire first. Conversely, an unmanned (but not autonomous) vessel might have remote human operators, giving a state some leeway to back down after “rocking the boat.” In a crisis, it may be difficult for an adversary to distinguish between the two types of vessels.

A further complication arises if a state misrepresents a recallable vessel as non-recallable, perhaps to gain the negotiating leverage of “tying hands,” while maintaining a secret exit option. And even if an autonomous vessel is positively identified as such, attributing “intent” to it is a gray area. The more autonomously a vessel operates, the easier it is to attribute its behaviors to its programming, but the harder it is to determine whether its actions in a specific scenario are intended by humans (versus being default actions or error).

Unmanned Aerial Vehicles?

Scholars have begun to address such questions by studying unmanned aerial systems.4 To give two recent examples, one finding suggests that unmanned aircraft may be de-escalatory assets, since the absence of a pilot means domestic publics would be less likely to demand blood-for-blood if a drone gets shot down.5 Another scholar finds that because drones combine persistent surveillance with precision strike, they can “increase the certainty of punishment” – making threats more credible.6

Caution should be taken in applying such lessons to the maritime realm. First, unmanned ships and submarines are decades behind unmanned aerial vehicles in sophistication. Accordingly, current plans point to a (potentially decades-long) roll-out period during which unmanned vessels will be partially or optionally manned.7 Such vessels could appear unmanned to an adversary when in fact crews are simply not visible. This complicates rules of engagement, and warps expectations for retaliation if a state targets an apparently unmanned vessel that in fact has a skeleton crew.

Second, ships and submarines have much longer endurance than aircraft, so their mechanical and software problems will be subject to local troubleshooting and digital forensic examination less often. An aerial drone that suffers an attempted hack will typically return to base within hours. Unmanned ships and submarines, by contrast, have far longer transit and on-station times, especially when dispersed across a wide geographic area for distributed maritime operations. This complicates efforts to attribute failures to “benign” causes or adversarial compromise. The question may not be whether an attempted attack merits a response due to loss of life, but rather whether it represents the opening salvo in a conflict.

Finally, with regard to the combination of persistent surveillance and precision strike, most unmanned maritime systems in advanced stages of development for the U.S. Navy do not combine sensing and shooting. Small- and medium-sized surface craft, for instance, are much closer to deployment than the U.S. Navy’s “Large Unmanned Surface Vessel,” which is envisioned as an adjunct missile magazine. The small- and medium-sized craft are expected to serve as scouts, minesweepers, and distributed sensors. Accordingly, they do little to communicate credible threats, but they present attractive targets for a first mover seeking to blind its adversary at the outset of a conflict.

Inadvertent Nuclear Escalation

During a conventional war, even if adversaries carefully avoid targeting each other’s nuclear weapons, other parts of a military’s nuclear deterrent may be dual-use systems exposed to conventional attack. An attack on an enemy’s command-and-control, early warning systems, attack submarines, or the like – even one conducted purely for conventional objectives – could make the target state fear that its nuclear deterrent is becoming vulnerable.8 This fear could encourage a state to launch its nuclear weapons before it is too late. Incremental improvements to targeting and sensing in the past two decades – especially in the underwater realm – have exacerbated the problem by making retaliatory assets easier to find and destroy.9

In the naval context, the risk is that one side may perceive a “use it or lose it” scenario if it believes that its ballistic missile submarines have all been (or are close to being) located. In particular, the ever-wider deployment of assets that make the underwater battlespace more transparent – such as small, long-duration underwater vehicles equipped with sonar – could undermine an adversary’s second-strike capability. Today, the U.S. Navy’s primary anti-submarine platforms (surface combatants, attack submarines, and maritime patrol aircraft) combine organic sensing and offensive capabilities. The shift to distributed maritime operations using unmanned platforms, however, portends a future of disaggregated capabilities, in which small platforms without onboard weapons systems provide remote sensing to the joint force. If these sensing platforms are considered non-escalatory because they lack offensive capabilities and sailors onboard, the U.S. Navy might deploy them more widely, including in waters where they could help locate an adversary’s ballistic missile submarines.10

Escalation in Cyberspace

The U.S. government’s shift to persistent engagement in cyberspace, a strategy called “Defend Forward,”11 has sharpened two debates on cyber escalation. The first concerns whether operations in the cyber domain expose previously secure adversary capabilities to disruption, shifting incentives for preemption on either side.12 The second concerns whether the effects generated by cyberattacks (i.e., cyber effects or physical effects) can trigger a “cross-domain” response.13

These debates remain unresolved. Narrowing the focus to cyberattacks on unmanned or autonomous vessels presents an additional analytical challenge, because these technologies are nascent and efforts to ensure their cyber resilience remain classified. Platforms without crews may be attractive cyber targets, in part because interfering with an unmanned vessel may be perceived as less escalatory when no human life is directly at risk.

But a distinction must be made between the compromise of a single vessel and its follow-on effects at the system, or fleetwide, level. Based on current plans, unmanned vessels are most likely to be employed as part of an extended, networked hybrid fleet. If penetrating one unmanned vessel’s cyber defenses allows an adversary to move laterally across a network, the resulting “effect” may be severe, potentially degrading a whole mission or warfare area. The subsequent decline in offensive or defensive capacity at the operational level of war could shift incentives for preemption. Since unmanned vessels operating as part of a team (with other unmanned vessels or with manned ones) depend on beyond-line-of-sight communications, interrupting one of these pathways (e.g., by disabling a geostationary satellite over the area of operations) could have a similar systemic effect.

The Role of Human Judgment

Modern naval operations already depend on automated combat systems, lists of “if-then” statements, and data links. For decades, people have increasingly assigned mundane and repetitive (or computationally laborious) shipboard tasks to computers, leaving officers and sailors in a supervisory role. This trend is accelerating with the introduction of unmanned and autonomous vessels, especially when combined with artificial intelligence. These technologies are likely to make human judgment more, not less, important.14 Many future naval officers will be designers, regulators, or managers of automated systems. Civilian policymakers, for their part, will direct the use of unmanned and autonomous maritime systems to signal capability and intent in crises. For both policymakers and officers, questions requiring substantial judgment will include:

The “moral hazard” problem. If unmanned vessels are perceived as less escalatory – because they lack crews, or because they carry only sensors and no offensive capabilities – are they more likely to be employed in ways that incur other risks (such as threatening adversary defensive or nuclear deterrent capabilities in peacetime)?

The autonomy/intent paradox. When will an autonomous vessel’s action be considered a signal of an adversary’s intent (since the adversary designed and coded the vessel to act a certain way), versus an action that the vessel “decided” to take on its own? If an adversary claims ignorance – that he did not intend an autonomous vessel to act a certain way – when will he be taken at his word?15

The attribution problem. Since unmanned vessels have no crews, local troubleshooting of equipment – along with digital forensics – will occur less frequently than it does on manned vessels. Remotely attributing a problem to routine component or software failure, versus to adversarial cyberattack, will often be harder than it would be with physical access. Will there have to be a higher “certainty threshold” for positive attribution of an attack on an unmanned vessel?

The “roll-out” uncertainty. How will the first few decades of hybrid fleet operations (utilizing partial- and optional-manning constructs) complicate the decision to target or compromise unmanned vessels? If a vessel appears unmanned but has an unseen skeleton crew, and then suffers an attack, how should the target state assess the attacker’s claim of ignorance about the presence of personnel onboard?

The cyber problem. Does unmanned systems’ attractiveness as cyber targets (due to their lack of personnel and their often highly networked employment) create a system-wide vulnerability for warfare areas that lean more heavily on unmanned systems than others do? Which warfare areas would have to be affected to change incentives for preemption?

Since unmanned vessels have not yet been broadly integrated into fleet operations, these questions have no definitive, evidence-based answers. But they can help frame the problem. The maritime domain in East Asia is already particularly susceptible to escalation. Ideally, interactions between potential foes should never escalate without the consent and direction of policymakers. In practice, however, interactions at sea can escalate due to hyper-local misperceptions, shaped by factors such as command, control, and communications; situational awareness; and relative capabilities. All of these factors are changing with the advent of unmanned and autonomous platforms. Escalation in this context cannot be an afterthought.

Jonathan Panter is a Ph.D. candidate in Political Science at Columbia University. His research examines Congressional oversight over U.S. naval operations. Prior to attending Columbia, Mr. Panter served as a Surface Warfare Officer in the United States Navy. He holds an M.Phil. and M.A. in Political Science from Columbia, and a B.A. in Government from Cornell University.

The author thanks Johnathan Falcone, Anand Jantzen, Jenny Jun, Shuxian Luo, and Ian Sundstrom for comments on earlier drafts of this article.

References

1. Thomas Gibbons-Neff and Eric Schmitt, “Miscommunication Nearly Led to Russian Jet Shooting Down British Spy Plane, U.S. Officials Say,” New York Times, April 12, 2023, https://www.nytimes.com/2023/04/12/world/europe/russian-jet-british-spy-plane.html.

2. James D. Fearon, “Rationalist Explanations for War,” International Organization 49, no. 3 (Summer 1995): 379-414.

3. Thomas C. Schelling, Arms and Influence (New Haven: Yale University Press, [1966] 2008), 43-48, 99-107.

4. See, e.g., Michael C. Horowitz, Sarah E. Kreps, and Matthew Fuhrmann, “Separating Fact from Fiction in the Debate over Drone Proliferation,” International Security 41, no. 2 (Fall 2016): 7-42.

5. Erik Lin-Greenberg, “Wargame of Drones: Remotely Piloted Aircraft and Crisis Escalation,” Journal of Conflict Resolution (2022). See also Erik Lin-Greenberg, “Game of Drones: What Experimental Wargames Reveal About Drones and Escalation,” War on the Rocks, January 10, 2019, https://warontherocks.com/2019/01/game-of-drones-what-experimental-wargames-reveal-about-drones-and-escalation/.

6. Amy Zegart, “Cheap Fights, Credible Threats: The Future of Armed Drones and Coercion,” Journal of Strategic Studies 43, no. 1 (2020): 6-46.

7. Sam LaGrone, “Navy: Large USV Will Require Small Crews for the Next Several Years,” USNI News, August 3, 2021, https://news.usni.org/2021/08/03/navy-large-usv-will-require-small-crews-for-the-next-several-years.

8. Barry D. Posen, Inadvertent Escalation (Ithaca: Cornell University Press, 1991); James Acton, “Escalation through Entanglement: How the Vulnerability of Command-and-Control Systems Raises the Risks of an Inadvertent Nuclear War,” International Security 43, no. 1 (Summer 2018): 56-99. For applications to contemporary Sino-US security competition, see: Caitlin Talmadge, “Would China Go Nuclear? Assessing the Risk of Chinese Nuclear Escalation in a Conventional War with the United States,” International Security 41, no. 4 (Spring 2017): 50-92; Fiona S. Cunningham and M. Taylor Fravel, “Dangerous Confidence? Chinese Views on Nuclear Escalation,” International Security 44, no. 2 (Fall 2019): 61-109; and Wu Riqiang, “Assessing China-U.S. Inadvertent Nuclear Escalation,” International Security 46, no. 3 (Winter 2021/2022): 128-162.

9. Keir A. Lieber and Daryl G. Press, “The New Era of Counterforce,” International Security 41, no. 4 (Spring 2017): 9-49; Rose Gottemoeller, “The Standstill Conundrum: The Advent of Second-Strike Vulnerability and Options to Address It,” Texas National Security Review 4, no. 4 (Fall 2021): 115-124.

10. Jonathan D. Caverley and Peter Dombrowski suggest that one component of crisis stability – the distinguishability of offensive and defensive weapons – is harder to achieve at sea because naval platforms are designed to perform multiple missions. From this perspective, disaggregating capabilities might improve offense-defense distinguishability and prove stabilizing, rather than escalatory. See: Jonathan D. Caverley and Peter Dombrowski, “Cruising for a Bruising: Maritime Competition in an Anti-Access Age,” Security Studies 29, no. 4 (2020): 680-681.

11. For an introduction to this strategy, see: Michael P. Fischerkeller and Robert J. Harknett, “Persistent Engagement, Agreed Competition, and Cyberspace Interaction Dynamics and Escalation,” Cyber Defense Review (2019), https://cyberdefensereview.army.mil/Portals/6/CDR-SE_S5-P3-Fischerkeller.pdf.

12. Erik Gartzke and John R. Lindsay, “Thermonuclear Cyberwar,” Journal of Cybersecurity 3, no. 1 (March 2017): 37-48; Erica D. Borghard and Shawn W. Lonergan, “Cyber Operations as Imperfect Tools of Escalation,” Strategic Studies Quarterly 13, no. 3 (Fall 2019): 122-145.

13. See, e.g., Sarah Kreps and Jacquelyn Schneider, “Escalation Firebreaks in the Cyber, Conventional, and Nuclear Domains: Moving beyond Effects-Based Logics,” Journal of Cybersecurity 5, no. 1 (Fall 2019): 1-11; Jason Healey and Robert Jervis, “The Escalation Inversion and Other Oddities of Situational Cyber Stability,” Texas National Security Review 3, no. 4 (Fall 2020): 30-53.

14. Avi Goldfarb and John R. Lindsay, “Prediction and Judgment: Why Artificial Intelligence Increases the Importance of Humans in War,” International Security 46, no. 3 (Winter 2021/2022): 7-50.

15. The author thanks Tove Falk for this insight.

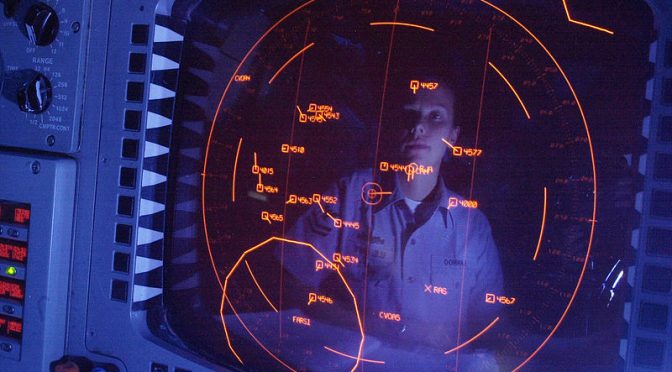

Featured Image: A medium displacement unmanned surface vessel and an MH-60R Sea Hawk helicopter from Helicopter Maritime Strike Squadron (HSM) 73 participate in U.S. Pacific Fleet’s Unmanned Systems Integrated Battle Problem (UxS IBP) April 21, 2021. (U.S. Navy photo by Chief Petty Officer Shannon Renf)